Selected AI Systems Demos

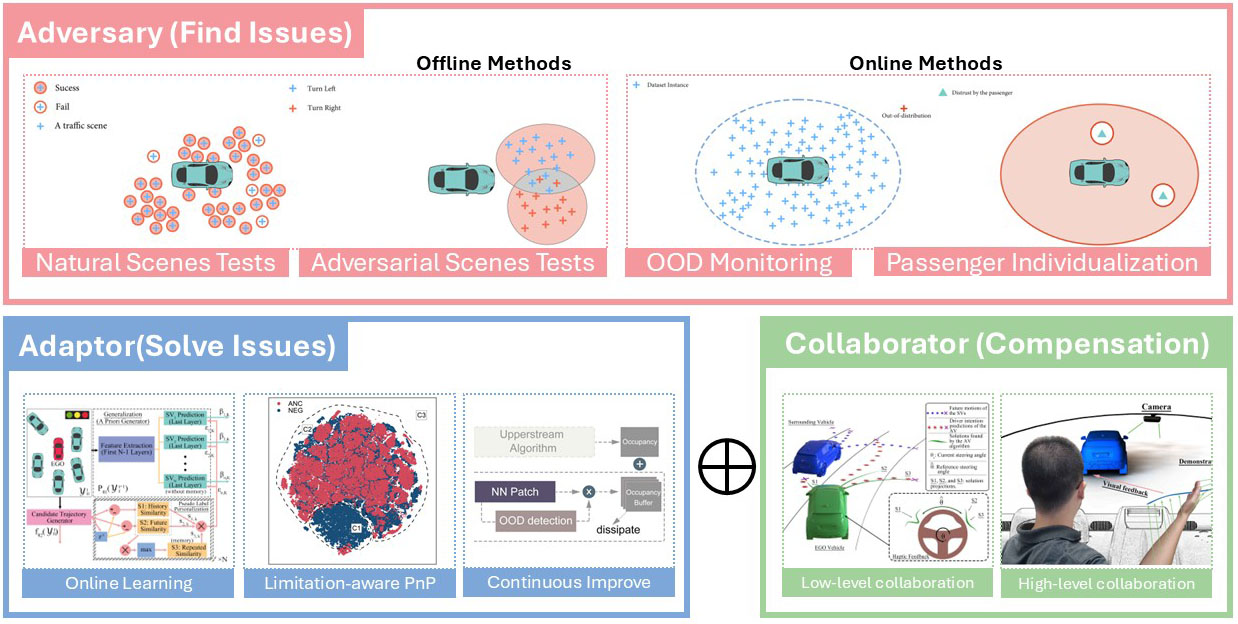

Overview:

A framework for designing and evaluating robust AI systems through adversarial testing, online adaptation, and human-in-the-loop collaboration. The demos below use autonomous driving as a safety-critical testbed, but the framework applies to broader AI and robotic systems.

Demo 1 — Natural Scene Generation (2022)

Problem: Generating explainable, human-like, and diverse traffic scenes for system testing.

Method: A Bayesian game-theoretic model of surrounding road users, validated via a Turing-test-style evaluation.

Signal: Enables naturalistic scene generation for testing, going beyond rule-based baselines such as the Intelligent Driver Model (IDM) and the MOBIL lane-change model.
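
For context on the rule-based baselines mentioned above, here is a minimal sketch of the standard IDM car-following rule. It illustrates the baseline only, not the Bayesian game-theoretic model of Demo 1. The function name and default parameter values are illustrative assumptions, not values taken from this work.

```python
import math


def idm_acceleration(v, gap, dv,
                     v0=30.0,    # desired speed in m/s (assumed value)
                     T=1.5,      # desired time headway in s (assumed value)
                     a_max=1.0,  # maximum acceleration in m/s^2 (assumed value)
                     b=2.0,      # comfortable deceleration in m/s^2 (assumed value)
                     s0=2.0,     # minimum standstill gap in m (assumed value)
                     delta=4.0): # acceleration exponent (common default)
    """Return the IDM acceleration (m/s^2) of a following vehicle.

    v   : follower speed (m/s)
    gap : bumper-to-bumper distance to the leader (m), must be > 0
    dv  : approach rate, follower speed minus leader speed (m/s)
    """
    # Desired dynamic gap: standstill gap plus headway and braking terms.
    s_star = s0 + max(0.0, v * T + v * dv / (2.0 * math.sqrt(a_max * b)))
    # Free-road term minus interaction term.
    return a_max * (1.0 - (v / v0) ** delta - (s_star / gap) ** 2)
```

Because the rule is deterministic given its parameters, every simulated driver with the same settings reacts identically, which is the kind of limited diversity that motivates a learned or game-theoretic scene generator.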

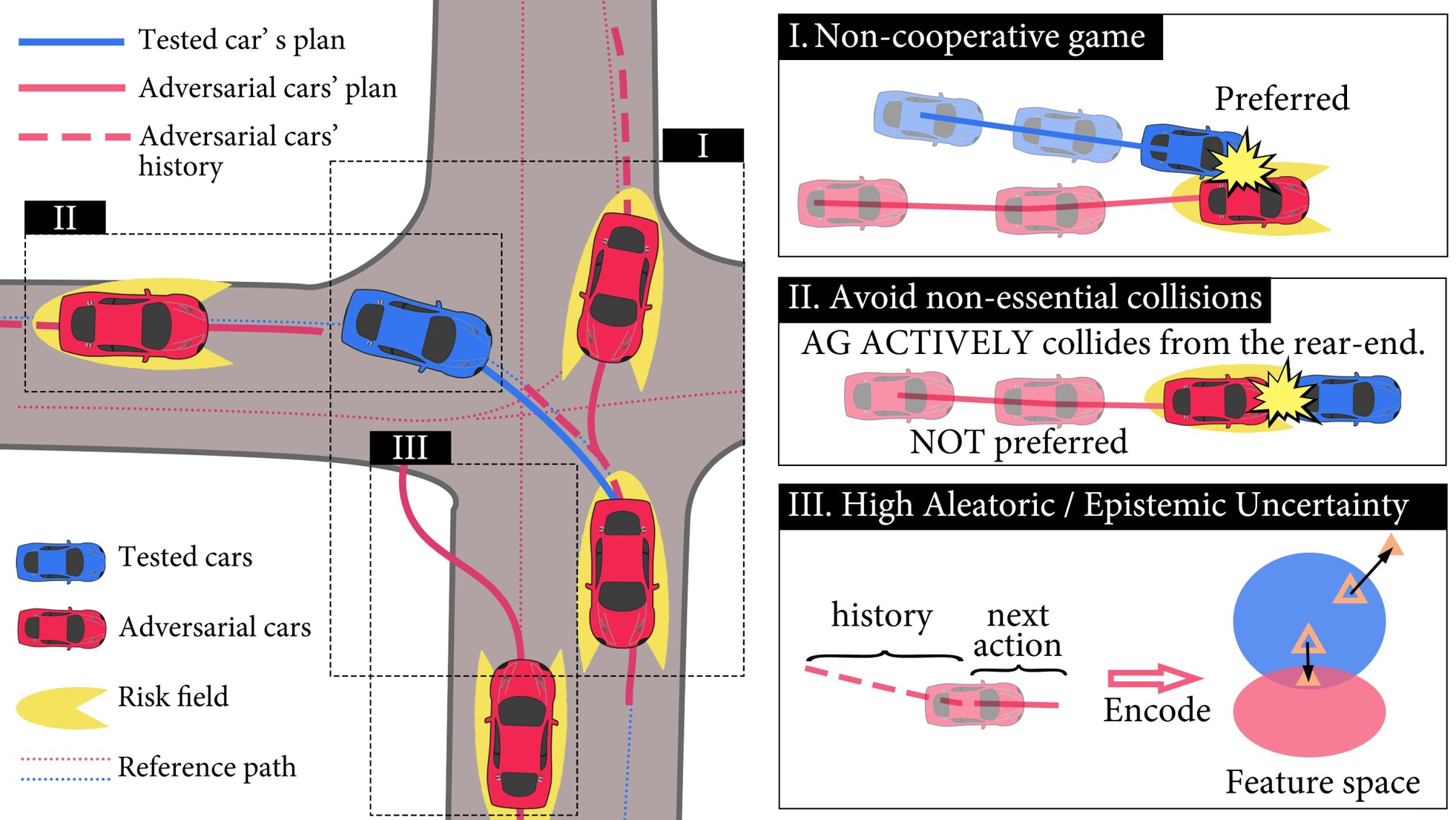

Demo 2 — Adversarial Scene Generation (2025)

Problem: AI vulnerabilities arising from rare, ambiguous, and high-risk conditions.

Method: Adversarial scene generation using game-theoretic modeling, encoder-level attacks, and asymmetric risk fields.

Signal: Efficiently exposes AI failure modes that are missed by standard evaluation.

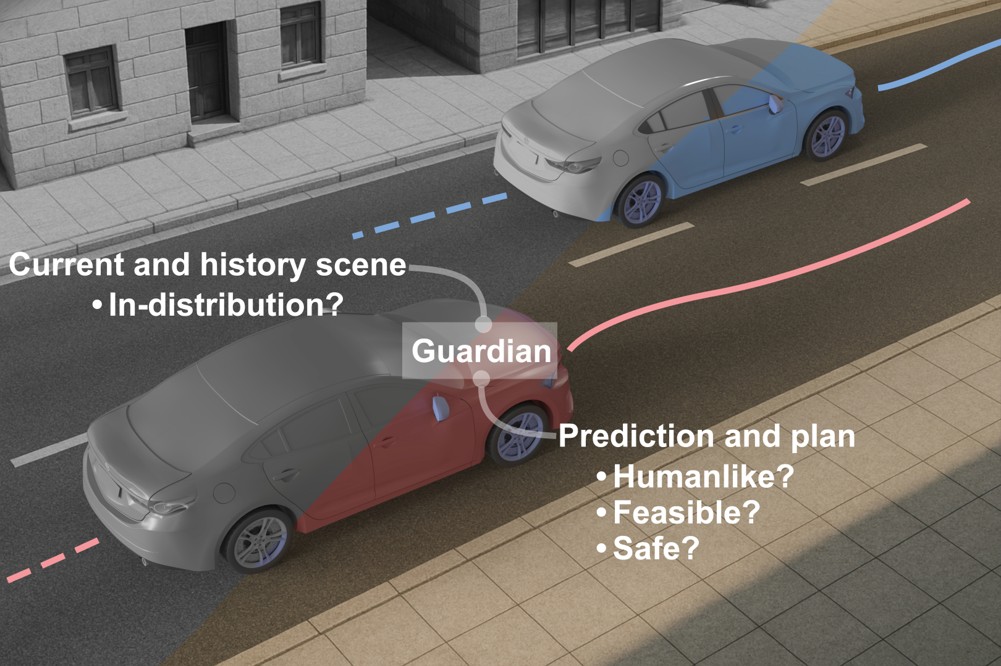

Demo 3 — Online Monitoring & OOD Detection (2025)

Problem: Runtime detection and monitoring of out-of-distribution (OOD) conditions.

Method: Counterfactual synthesis using diffusion models, GANs, and game-theoretic approaches.

Signal: Safeguards system reliability under safety-critical and distribution-shifted conditions.
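
To make the idea of a runtime OOD monitor concrete, the sketch below flags inputs whose features lie far from a reference set. It uses a simple per-feature z-score check as a stand-in, not the counterfactual synthesis approach named above. The class name, the threshold, and the feature vectors are hypothetical.

```python
from statistics import mean, pstdev


class ZScoreMonitor:
    """Flag feature vectors that deviate strongly from in-distribution data.

    Hypothetical helper for illustration; a simple stand-in for a real
    OOD detector.
    """

    def __init__(self, reference, threshold=3.0):
        # reference: list of equal-length feature vectors seen in training.
        dims = list(zip(*reference))
        self.mu = [mean(d) for d in dims]
        # Guard against zero spread in a constant feature.
        self.sigma = [pstdev(d) or 1e-9 for d in dims]
        self.threshold = threshold  # assumed cutoff, in standard deviations

    def score(self, x):
        # Largest absolute z-score across features.
        return max(abs(xi - m) / s
                   for xi, m, s in zip(x, self.mu, self.sigma))

    def is_ood(self, x):
        return self.score(x) > self.threshold


# Usage: fit on reference features, then check each runtime sample.
monitor = ZScoreMonitor([[0.0, 10.0], [1.0, 12.0], [2.0, 11.0], [1.0, 11.0]])
in_dist_flag = monitor.is_ood([1.0, 11.0])
shifted_flag = monitor.is_ood([10.0, 11.0])
```

In a deployed system the flag would typically trigger a fallback behavior or a request for human input rather than a hard stop.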

Demo 4 — Subjective Assessment (2025)

Problem: Ensuring user trust in AI systems during real-world operation.

Method: Human-in-the-loop subjective assessment using multimodal sensing.

Signal: Enables personalized system behavior with calibrated user trust.

Demo 5 — High-Level Human–Machine Interaction (2025)

Problem: Enabling human intervention and preference expression when autonomous systems face out-of-distribution conditions or personalization needs.

Method: High-level, natural human–machine interaction (e.g., gesture-based inputs).

Signal: Allows intuitive human guidance of autonomous systems in rare or ambiguous situations.